- 21 September 2017

- Benny Har-Even

For many, PowerVR is closely associated with graphics technology, from the early days of desktop PC gaming to arcade consoles, and later to home consoles and then, of course, to mobile. Indeed, we’ve recently celebrated our 25th anniversary in a series of blog posts. However, PowerVR also includes a range of other IP for vision and AI applications.

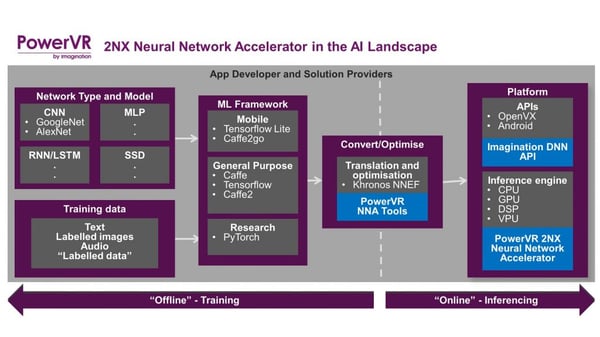

This week, PowerVR is adding to its proud history with an entirely new category of IP, a hardware neural network accelerator: introducing the PowerVR Series2NX. We have dubbed this a Neural Network Accelerator (NNA). It is a full hardware solution built from the ground up to support many neural net models and architectures, as well as machine learning frameworks such as Caffe and Google’s TensorFlow, at an industry-leading level of performance and low power consumption.

Neural networks are, of course, becoming ever more prevalent and are found in a wide variety of markets. They tailor your social media feed to match your interests, enhance your photos to make your pictures look better, and power the feature detection and eye tracking in AR/VR headsets. They can be used for security, offering improved facial recognition and crowd behaviour analysis in smart surveillance, and are better than humans at detecting fraud in online payment systems. Neural nets are going to power the driverless car systems that are coming down the road and the collision avoidance and subject tracking in the drones that will one day deliver our parcels. Another use that just recently came to wide attention is building up a picture of a user’s face for unlocking a mobile phone.

Hardware is the way forward

To do their work, neural networks need to be trained, and this is usually done ‘offline’ on powerful server hardware. Using the trained network to recognise patterns or objects is known as inferencing, and this is done in real time.

Of course, the bigger the network, the larger the computational needs, and this requires new levels of performance, especially in mobile use cases. While a neural network-based inference engine can be run on a CPU, such engines are typically deployed on GPUs to take advantage of their highly parallel design, which processes neural nets orders of magnitude faster. However, to enable the next generation of performance within strict power budgets, dedicated hardware for accelerating neural network inference is required.

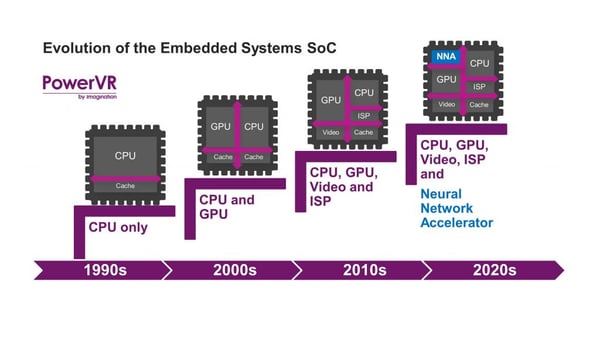

This is a natural evolution for hardware. Originally, the early desktop processors didn’t even have a maths co-processor for accelerating the floating point calculations on which applications such as games are so dependent, but since the 1980s it has been a standard part of the CPU. In the 1990s, CPUs gained their own onboard memory cache to boost performance, and later still, GPUs were integrated. This was followed in the 2010s by ISPs and hardware support for codecs for smooth video playback. Now it’s the turn of neural networks to get their own optimised silicon.

Keeping it local

In many cases, the inferencing could be run on powerful hardware in the cloud, but for many reasons it’s now time to move this to edge devices. Where fast response is required, it’s simply not practical to run neural networks over the network due to latency issues. Moving inferencing on-device also eliminates the security issues that could arise from sending data off the device. As cellular networks may not always be available, be they 3G, 4G or 5G, dedicated local hardware will be more reliable, as well as offering greater performance and, crucially, much-reduced power consumption.

Drones are one example of a technology that will make use of neural network hardware acceleration for fast and efficient collision detection

To take one example, a drone typically flies at speeds in excess of 150mph or 67 metres/sec. Without dedicated hardware, it would need to anticipate obstacles 10-15m ahead to avoid a collision, but because of latency, bandwidth and network availability, it’s impossible to do this over the cloud. With a true hardware solution, such as the PowerVR Series2NX, the drone can run multiple neural networks to identify and track objects simultaneously at only 1m distance. If we want our parcels to come to us via drone or want to see new unique camera angles in our favourite sports, neural network hardware assistance will be essential.
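To get a feel for what those distances mean in time, the figures above can be turned into a reaction budget. This is only a back-of-the-envelope sketch: it assumes the drone closes on a stationary obstacle at a constant 150mph, and the function name is ours, not part of any PowerVR tooling.

```python
MPH_TO_MPS = 0.44704  # exact conversion: 1 mile = 1609.344 m, 1 hour = 3600 s


def reaction_budget_ms(distance_m: float, speed_mps: float) -> float:
    """Time in milliseconds before the drone covers distance_m at speed_mps."""
    return distance_m / speed_mps * 1000.0


# 150mph is the speed quoted in the text (about 67 metres/sec).
speed_mps = 150 * MPH_TO_MPS

# Assumption: constant speed, stationary obstacle, no braking distance.
for distance_m in (15.0, 10.0, 1.0):
    budget = reaction_budget_ms(distance_m, speed_mps)
    print(f"{distance_m:>4.0f} m ahead -> {budget:6.1f} ms to detect and react")
```

Under these assumptions, 10-15m of warning corresponds to roughly 150 to 225 milliseconds, while 1m leaves only about 15 milliseconds, which is far less than a typical round trip to a cloud service.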

These days our smartphones are repositories of our pictures, and typically there might be 1,000 or more photos on the device which are sorted for us automatically in a variety of ways, including, for example, identifying all photos with a particular person in them. This requires analysis: a premium GPU running a neural net could process such a library in around 60 seconds, but the PowerVR Series2NX can do it in just two seconds.

The PowerVR Series2NX will enable very high-speed image processing for mobile with low power consumption

Then there’s battery life. A GPU could process around 2,400 pictures using 1% of the battery power. Conversely, consuming the same amount of power, the Series2NX will handle 428,000 images, demonstrating its leadership with the highest inference/mW in the industry.
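The photo-library and battery figures quoted above can be restated as simple ratios. The sketch below does only that: the numbers are the ones given in the text, the 1,000-photo library is taken as a round figure, and nothing here is a measurement.

```python
# Figures quoted in the text.
photos = 1_000                    # assumed library size ("1,000 or more photos")
gpu_seconds = 60.0                # premium GPU, time to process the library
nna_seconds = 2.0                 # Series2NX, time to process the library
gpu_images_per_percent = 2_400    # images per 1% of battery on a GPU
nna_images_per_percent = 428_000  # images per 1% of battery on the Series2NX

speedup = gpu_seconds / nna_seconds
efficiency_gain = nna_images_per_percent / gpu_images_per_percent

print(f"GPU: {photos / gpu_seconds:.1f} images/s, NNA: {photos / nna_seconds:.1f} images/s")
print(f"Speedup: {speedup:.0f}x")
print(f"Images per 1% of battery: about {efficiency_gain:.0f}x more on the NNA")
```

In other words, the quoted figures amount to a 30x speedup and a gain of around 178x in images processed per unit of battery.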

This low power consumption can enable new use cases, for example, in areas such as smart surveillance. The Series2NX is powerful enough to perform on-device analytics, whether in a camera in a city centre, a stadium or a home security system. Due to the Series2NX’s ability to run multiple different network types, it can enable more intelligent decision making, thereby reducing false positives. Thanks to the low-power processing, these cameras can now be battery powered, making them easier to deploy and manage.

Flexible bit-depth support

To make these use cases possible, the Series2NX NNA has been designed from the ground-up for efficient neural network inferencing. So what makes our Series2NX hardware different from other neural network solutions, such as DSPs and GPUs?

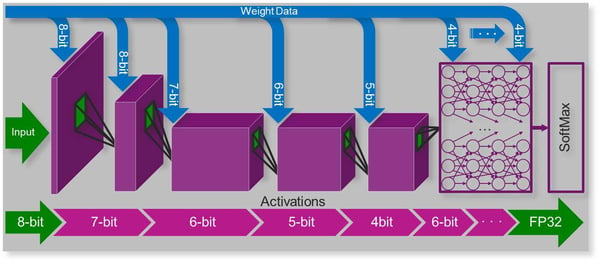

First, the Series2NX’s ultra-low power consumption characteristics leverage our expertise in designing for mobile platforms. The second factor is our flexible bit-depth support, available, crucially, on a per-layer basis. A neural network is commonly trained at full 32-bit precision, but doing so for inference would require a very large amount of bandwidth and consume a lot of power, which would be prohibitive within mobile power envelopes; i.e. even if you had the performance to run it, your battery life would take a huge hit.

To deal with this, the Series2NX offers variation of the bit-depth for both weights and data, making it possible to maintain high inference accuracy, while drastically reducing bandwidth requirements, with a resultant dramatic reduction in power requirements. Our hardware is the only solution on the market to support bit-depths from 16-bit (as required for use cases which mandate it, such as automotive), down to 4-bit.
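To illustrate the trade-off between bit depth and accuracy, here is a minimal sketch of symmetric uniform quantization, a generic textbook technique. It is not the Series2NX’s actual quantization scheme, which is not described here, and the random weights are a stand-in for a real layer.

```python
import random


def quantize(values, bits):
    """Symmetric uniform quantization to signed integers of the given width.

    Returns (codes, scale) such that code * scale approximates each input.
    """
    qmax = 2 ** (bits - 1) - 1  # 32767 for 16-bit, 127 for 8-bit, 7 for 4-bit
    peak = max(abs(v) for v in values)
    scale = peak / qmax if peak > 0 else 1.0
    codes = [max(-qmax, min(qmax, round(v / scale))) for v in values]
    return codes, scale


def dequantize(codes, scale):
    """Map integer codes back to approximate real values."""
    return [c * scale for c in codes]


def mean_abs_error(a, b):
    return sum(abs(x - y) for x, y in zip(a, b)) / len(a)


random.seed(0)
# Hypothetical layer: 1,024 weights drawn from a narrow Gaussian.
weights = [random.gauss(0.0, 0.1) for _ in range(1024)]

for bits in (16, 8, 4):
    codes, scale = quantize(weights, bits)
    err = mean_abs_error(weights, dequantize(codes, scale))
    print(f"{bits:>2}-bit: {bits / 32:.1%} of 32-bit storage, mean abs error {err:.6f}")
```

Each halving of the bit depth halves the storage and bandwidth for those weights, at the cost of a larger rounding error. How much error a given layer can tolerate is what makes the choice of bit depth worth tuning.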

Example implementation of variable weights and precision in the Series2NX Neural Network Accelerator

Unlike other solutions, however, we do not apply a brute-force approach to this reduced bit depth. We can vary it on a per-layer basis for both weights and data, so developers can fully optimise the performance of their networks. Furthermore, we keep higher precision internally to preserve accuracy. The result is higher performance at lower bandwidth and power.

In practice, the Series2NX requires as little as 25% of the bandwidth compared with competing solutions. Moving from 8-bit down to 4-bit precision for those use cases where it is appropriate enables the Series2NX to consume 69% of the power with less than a 1% drop in accuracy.
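The effect of choosing bit depth per layer on weight bandwidth can be sketched with some simple bookkeeping. Everything in this example is hypothetical: the layer names and sizes are invented, and the mixed profile simply assumes that the small early layers are kept wide while the large later layers are narrowed.

```python
# Hypothetical network: (layer name, number of weights). Not a real model.
LAYERS = [
    ("conv1", 10_000),
    ("conv2", 200_000),
    ("conv3", 800_000),
    ("fc", 4_000_000),
]


def weight_bytes(layers, bits_per_layer):
    """Total bytes of weights fetched per inference for a per-layer bit-depth profile."""
    return sum(count * bits_per_layer[name] / 8 for name, count in layers)


uniform_16 = {name: 16 for name, _ in LAYERS}
uniform_8 = {name: 8 for name, _ in LAYERS}
# Assumed mixed profile, chosen purely for illustration.
mixed = {"conv1": 16, "conv2": 8, "conv3": 4, "fc": 4}

baseline = weight_bytes(LAYERS, uniform_16)
for label, profile in (("16-bit", uniform_16), ("8-bit", uniform_8), ("mixed", mixed)):
    total = weight_bytes(LAYERS, profile)
    print(f"{label:>6}: {total / 1e6:5.2f} MB of weights, {total / baseline:.0%} of 16-bit")
```

With this made-up profile the mixed configuration needs a little over a quarter of the weight traffic of the uniform 16-bit version, because the largest layers dominate the total. Real savings depend on the network and on how far each layer can be narrowed without hurting accuracy.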

Leading-edge performance

In terms of raw performance, the PowerVR Series2NX is also class-leading. Recently a smartphone manufacturer announced that its hardware used to enable face detection for unlocking the phone offered 600 billion operations per second. A single core of our initial PowerVR Series2NX IP, running at a conservative 800MHz, can offer up to 2048 MACs/cycle (the industry standard performance indicator), meaning we can run over 3.2 trillion operations a second – twice our nearest competitor. The Series2NX is a highly scalable solution, and through the use of multiple cores, much higher performance can be achieved, if required. As they say in football, it’s men against boys.
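The headline figure follows directly from the numbers quoted above, as this short sketch shows. It assumes the usual convention that one multiply-accumulate (MAC) counts as two operations, and the multi-core lines assume ideal linear scaling, which is a simplification rather than a published figure.

```python
MACS_PER_CYCLE = 2048  # per core, as quoted in the text
CLOCK_HZ = 800e6       # 800MHz, as quoted in the text
OPS_PER_MAC = 2        # assumption: one multiply plus one add per MAC


def peak_ops_per_second(macs_per_cycle, clock_hz, cores=1):
    """Theoretical peak operations per second, assuming ideal scaling across cores."""
    return macs_per_cycle * clock_hz * OPS_PER_MAC * cores


single = peak_ops_per_second(MACS_PER_CYCLE, CLOCK_HZ)
print(f"1 core:  {single / 1e12:.2f} trillion ops/s")

# Hypothetical multi-core configurations, assuming perfect linear scaling.
for cores in (2, 4):
    total = peak_ops_per_second(MACS_PER_CYCLE, CLOCK_HZ, cores)
    print(f"{cores} cores: {total / 1e12:.2f} trillion ops/s")
```

A single core therefore peaks at about 3.28 trillion operations per second, which is where the "over 3.2 trillion" figure comes from.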

A demonstration of the PowerVR Series2NX NNA in action.

The Series2NX is powerful despite the fact that it is a very low area component, delivering the highest inference/mm² in the industry. In fact, if used together in a typical SoC, our PowerVR GPU and NNA solution will take up less silicon space than a competitor GPU on its own. Of course, a GPU is not required to use the Series2NX, and a CPU is needed only for the drivers.

Our Series2NX IP is also available with an optional memory management unit (MMU), enabling it to be used with Android or other modern complex OSs, without the need to lay down any additional silicon or for any complex software integration.

Wide support for network types, models, frameworks and APIs

Neural networks come in a variety of flavours, and the choice of which to use will depend on the task in hand. Many types are supported by the Series2NX NNA, including convolutional neural networks (CNNs), multilayer perceptrons (MLPs), recurrent neural networks (RNNs) and single shot detectors (SSDs). At launch, the Series2NX supports the major neural network frameworks, including Caffe and TensorFlow, and support for others will be investigated on an ongoing basis.

Using the optimised conversion and tuning tools we provide, coupled with the Imagination Deep Neural Network (DNN) API, developers can easily deploy networks from their chosen framework to run on the PowerVR NNA. PowerVR also has a long history of Android support, and the Series2NX will support Android when Google releases a neural network API for it.

Developers can easily prototype their apps by using existing desktop workflows and then use the Imagination DNN API to port them onto the Series2NX and enjoy the significant application speedup and power reduction.

Conclusion

As our world becomes ever more expectant of computers and devices having a greater understanding of the world, the PowerVR Series2NX NNA represents an inflection point in neural network acceleration and performance. With the highest inferences/milliwatt and the highest inferences/mm² in the industry, it’s the only IP solution that can meet the requirements for deploying neural networks within the power and performance constraints of mobile hardware. Support for all major networks and frameworks, combined with our Imagination DNN API, makes the PowerVR Series2NX NNA the ideal solution to drive the neural network applications of the future.